Why doesn't -1 equal 1?

For the last day of Inkhaven, I was debating whether to write a retrospective post, or a piece of fiction so I could complete my prestige run:

But then I got nerdsniped by Viv’s post, “I don’t care about AI”, which includes a fallacious proof that 1=-1. While I identified the incorrect step fairly quickly, I couldn’t stop thinking about exactly why that step was incorrect. So that’s what I’ll be writing about today.

I am aware that the actual error is Very Much Not The Point Of The Post, but I am a slave to my passions (math).

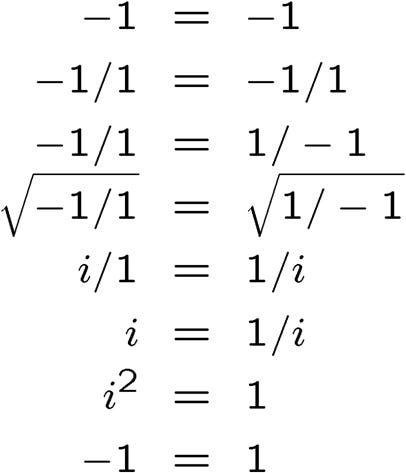

First, the proof:

(If you’d like to find the error yourself, now is the time. Note that you should not just find the incorrect step but also explain why it’s incorrect.)

To start, we will turn to a fanfiction coauthored by Eliezer Yudkowsky:

check each line and see where the error showed up first

- lintamande[1], planecrash

Great! Let’s go over the steps of the proof one by one:

1. -1=-1 (True)

2. -1/1=-1/1 (-1=-1, True)

3. -1/1=1/-1 (-1=-1, True)

4. sqrt(-1/1)=sqrt(1/-1) (i=i, True)

5. i/1=1/i (i=-i, False)

So now we know the error is between the steps “sqrt(-1/1)=sqrt(1/-1)” and “i/1 = 1/i”.
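The same line-by-line check can be done numerically. Here is a minimal sketch using Python's standard `cmath` module, whose `sqrt` returns the principal square root. The names `steps` and `first_bad_step` are illustrative, not from the original proof.

```python
import cmath

i = 1j  # Python's built-in imaginary unit

# Each step of the proof as a (left side, right side) pair.
steps = [
    (-1, -1),                                  # step 1
    (-1 / 1, -1 / 1),                          # step 2
    (-1 / 1, 1 / -1),                          # step 3
    (cmath.sqrt(-1 / 1), cmath.sqrt(1 / -1)),  # step 4
    (i / 1, 1 / i),                            # step 5
]

# 1-based index of the first step whose two sides differ.
first_bad_step = next(
    n for n, (lhs, rhs) in enumerate(steps, start=1) if lhs != rhs
)

for n, (lhs, rhs) in enumerate(steps, start=1):
    print(f"step {n}: {lhs} = {rhs} -> {lhs == rhs}")
print("first false step:", first_bad_step)
```

Steps 1 through 4 compare equal, and step 5 is the first one where the two sides disagree.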

Ok, but why is this step invalid?

On the surface, we’re just simplifying both sides of the equation. During simplification, the value of each side should not change. And indeed, the value of the left side remains i both before and after the transformation.

But the value of the right side changes from i to -i.

So we now know that in particular, the “simplification” of sqrt(1/-1) to 1/i is invalid.

But why is this transformation invalid? Isn’t it a law of square roots that sqrt(a/b)=sqrt(a)/sqrt(b)?

You might be feeling a little confused right now. To help, I will prove to you that -3=3. Here we go:

-3=sqrt(9) (because -3 * -3 = 9)

3=sqrt(9) (because 3 * 3 = 9)

Therefore, -3=3

The problem with this proof, of course, is that sqrt(x) is defined to be only the positive root of x, so -3 is not in fact the square root of 9.
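A small illustration of the difference between "a number whose square is 9" and "sqrt(9)", assuming Python's standard `math.sqrt` as the square root function (the variable names are just for this sketch):

```python
import math

# Both -3 and 3 solve x * x = 9 ...
solutions = [x for x in (-3, 3) if x * x == 9]

# ... but sqrt picks out only the non-negative one.
principal_root = math.sqrt(9)

print(solutions)       # [-3, 3]
print(principal_root)  # 3.0
```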

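In our case, 1/i=-i, which is negative, so it is not sqrt(1/-1). The actual square root of 1/-1 is -1/i, which does in fact equal i. So the law sqrt(a/b)=sqrt(a)/sqrt(b) is safe when a and b are both positive, but it breaks here because b=-1 is not.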
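To see where the quotient law for square roots holds and where it breaks, here is a rough sketch using `cmath.sqrt` (the principal root). The helper `quotient_rule_holds` is a made-up name for this example.

```python
import cmath

def quotient_rule_holds(a, b):
    """Return True if sqrt(a/b) equals sqrt(a)/sqrt(b) for principal roots."""
    lhs = cmath.sqrt(a / b)
    rhs = cmath.sqrt(a) / cmath.sqrt(b)
    return cmath.isclose(lhs, rhs)

print(quotient_rule_holds(4, 9))    # both positive
print(quotient_rule_holds(-1, 1))   # left side of the proof
print(quotient_rule_holds(1, -1))   # right side of the proof

# The candidate that was rejected, and the one that works:
wrong_root = 1 / 1j    # equals -i
right_root = -1 / 1j   # equals i
```

Only the last call reports a mismatch, which matches the step where the proof goes wrong.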

The reason the transformation is so tempting is that 1/i looks positive. We’re used to the idea that when you divide two positive numbers, you get another positive number. This is true for all real numbers.

But it’s not true for imaginary numbers. That’s how imaginary numbers are defined. For any real number x, x*x is never negative, which is why there is no real number x such that x*x=-1, which is why sqrt(-1) must be imaginary.

Imaginary numbers are defined such that the product of two positive imaginary numbers is negative. This means the product of a positive and negative imaginary number is positive, and thus, that a positive real divided by a positive imaginary is negative.
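These sign rules are easy to spot-check with Python's built-in complex numbers. This is only a sketch with arbitrarily chosen values (2i and 3i), reading "positive imaginary" as a positive multiple of i.

```python
i = 1j

pos_times_pos = (2 * i) * (3 * i)    # positive imaginary times positive imaginary
pos_times_neg = (2 * i) * (-3 * i)   # positive imaginary times negative imaginary
real_over_pos = 1 / (2 * i)          # positive real divided by positive imaginary

print(pos_times_pos == -6)       # the product is a negative real
print(pos_times_neg == 6)        # the product is a positive real
print(real_over_pos == -0.5 * i) # the quotient is a negative imaginary
print(1 / i == -i)               # the special case used in step 5
```

All four comparisons print `True`.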

TLDR: Step 5 is invalid because a square root cannot be negative, and 1/i=-i, which is negative.

For completeness, what I think of the actual thesis of Viv’s post: I can relate to being skeptical of the arguments for AI doom. I myself am skeptical that we are definitely doomed, and have written up some of my thoughts on the matter. But I take the possibility that the doomer arguments are true very seriously.

What I cannot relate to is not caring about AI at all, or, forgive me, not “feeling the agi”. Even if superhuman AI is not going to kill us, it still seems clear to me that it’s “fucking happening”, and its impact will be like a tidal wave. To claim otherwise feels a bit to me like a claim that -1=1.

Like Viv, I will offer no arguments, only my strong instinct.

[1] lintamande is Eliezer’s coauthor. Eliezer is “Iarwain”.

Basically i think whatever is going to happen with ai, it'll be big, but not big enough to justify me paying more than cursory attention to it instead of what I am currently paying attention to, especially given my strong dislike of and distaste for the epistemic and social norms around the idk "transformative ai community" or whatever you want to call it

No you didn't miss the point of the post at all you're using it correctly!

Sadly you have baited me into a substantive response :(

I just don't actually think anything is happening and my belief that it is not "fucking happening" is tied to strong intuitions about information density in the physical real world and what is and is not capturable by current or upcoming successful methods in AI.

I believe that superintelligence is not forbidden by physics but not that anything we're currently doing is likely to result in anything like a superintelligence, and also, there's no reason to think that just because something is permitted by physics means it will ever happen.

Conversely, I have no problem believing in doom conditional on superintelligence.

I think AI is already set to be significantly socially transformative, but in more or less a "normal" way, and not a nutso left-turn discontinuous way.